It is early 2026, and the intersection of AI and security has moved past the "hype" phase into a high-stakes arms race. We are currently seeing a massive shift from simple chatbots to "Agentic AI" (autonomous systems that can take actions on your behalf), which has created entirely new categories of risk. AI agents can be categorized by reasoning strategy, system architecture, functional role, and maturity level.

1. Categorization by Reasoning Strategy

This defines the "cognitive" depth of the agent—how it processes information and makes decisions.

- Reflexive Agents: The simplest "Trigger-Action" bots. They follow "if-then" rules (e.g., a thermostat or a basic auto-responder).

- Chain-of-Thought (CoT) Agents: Use LLMs to break down a prompt into a linear sequence of steps before acting.

- Reasoning-First Agents (ReAct/Tree-of-Thought): These agents "think" before and during execution. They use patterns like ReAct (Reason + Act) to observe the result of an action and adjust their next step dynamically.

- Reflective Agents: These include a "critique" loop where the agent reviews its own work (self-correction) to ensure quality before delivering a final result.
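The observe-and-adjust behavior of a ReAct-style agent can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a production agent: `fake_llm` is a hypothetical stand-in for a real model call, and `TOOLS` is an assumed tool registry with a single made-up tool.

```python
from typing import Callable

# Hypothetical tool registry; a real agent would call external APIs here.
TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_temperature": lambda city: "31" if city == "Pune" else "unknown",
}

def fake_llm(goal: str, observations: list[str]) -> tuple[str, str]:
    """Stand-in for a model call: returns (action, argument).

    With no observations it chooses a tool call; once it has seen
    a result it chooses to finish.
    """
    if not observations:
        return "lookup_temperature", "Pune"
    return "finish", f"The temperature is {observations[-1]} C"

def react_loop(goal: str, max_steps: int = 5) -> str:
    observations: list[str] = []
    for _ in range(max_steps):
        action, argument = fake_llm(goal, observations)  # reason
        if action == "finish":
            return argument
        observations.append(TOOLS[action](argument))     # act, then observe
    return "step limit reached"

answer = react_loop("What is the temperature in Pune?")
```

The `max_steps` cap is a design choice worth keeping in any real loop: it bounds how long a confused or manipulated agent can keep acting.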

2. Categorization by System Architecture

This defines how the "brain" is structured and whether it works alone or in a team.

| Category | Description | Best Use Case |
| --- | --- | --- |
| Single-Agent | One central model handles reasoning, memory, and tool use. | Simple, linear tasks (e.g., summarizing an email). |
| Multi-Agent (MAS) | A "Crew" of specialized agents (Researcher, Writer, Reviewer) collaborate. | Complex projects (e.g., building a software feature). |
| Hierarchical | A "Manager" agent delegates sub-tasks to "Worker" agents. | Enterprise workflows requiring high oversight. |
| Swarm / Peer-to-Peer | Agents collaborate as equals without a central manager. | Dynamic troubleshooting or creative brainstorming. |

3. Categorization by Functional Role

Agents are often classified by the specific "job" they perform in an ecosystem.

- Workflow Copilots: These live inside SaaS tools (like Slack, Salesforce, or Excel) to automate multi-app tasks.

- Research & Retrieval Agents: Advanced RAG (Retrieval-Augmented Generation) systems that autonomously find, verify, and cite data sources.

- Computer-Use Agents: Agents that can interact with Graphical User Interfaces (GUIs) just like a human—clicking buttons, filling forms, and navigating websites.

- Governance & Security Agents: "Guardian" agents that monitor other AI agents for policy violations, hallucinations, or security risks.

4. Maturity Spectrum

Enterprises now categorize agents based on their level of autonomy (the "Level 1-5" model):

- Level 1: Task Automation – Assisted automation of routine, rule-based tasks.

- Level 2: Semi-Autonomous – The agent makes task-level decisions but requires a human to orchestrate the overall workflow.

- Level 3: Highly Autonomous (Bounded) – The agent manages end-to-end processes independently but pauses at "human-in-the-loop" checkpoints for high-risk approvals.

- Level 4: Fully Autonomous – The agent handles error recovery and strategy adjustments without human intervention.

- Level 5: Ecosystem Intelligence – Multiple autonomous agents across different companies interact and negotiate with each other.
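The Level 3 "human-in-the-loop" checkpoint can be illustrated with a small gate in front of high-risk actions. The sketch below is an assumption-laden example: the `Action` type, the "low"/"high" risk labels, and the `approve` callback are all hypothetical, and a real deployment would route approvals through a ticketing or chat workflow.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Action:
    name: str
    risk: str  # "low" or "high"; these labels are an assumption

def run_with_checkpoints(
    actions: list[Action], approve: Callable[[Action], bool]
) -> list[str]:
    """Execute low-risk actions directly; ask a human before high-risk ones."""
    log = []
    for action in actions:
        if action.risk == "high" and not approve(action):
            log.append(f"blocked: {action.name}")  # approval denied, skip it
            continue
        log.append(f"executed: {action.name}")
    return log

plan = [Action("draft purchase order", "low"), Action("wire payment", "high")]
log = run_with_checkpoints(plan, approve=lambda action: False)
```

Here the reviewer denies everything, so the low-risk step runs and the payment is blocked.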

Agent-to-Agent (A2A) and Model Context Protocol (MCP)

| Feature | MCP (Model Context Protocol) | A2A (Agent-to-Agent) |
| --- | --- | --- |
| Primary Goal | Connecting an AI to Tools & Data | Connecting an AI to Other AIs |
| Analogy | A USB port for your computer | A phone call between two people |
| Interaction | Vertical (Agent → Database/API) | Horizontal (Agent ↔ Agent) |
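To make the "vertical" MCP interaction concrete, the sketch below builds the kind of request an agent sends when it asks an MCP server to run a tool. MCP messages are JSON-RPC 2.0, and tool invocation uses the `tools/call` method; the tool name `query_orders` and its arguments are hypothetical, and transport details are left out. The sketch covers only the MCP side.

```python
import json

def build_tool_call(request_id: int, tool: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request asking an MCP server to run a tool."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    })

# "query_orders" and its arguments are made up for illustration.
payload = build_tool_call(1, "query_orders", {"customer_id": "c-42"})
```

From a security standpoint, every such request is an auditable event: which agent called which tool, with which arguments.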

The biggest recent trend is the emergence of AI agents as the new "insider threat." Companies are deploying autonomous agents to handle procurement, coding, and customer service. Major operators of Agentic AI include Microsoft, Google Cloud, Amazon, and Salesforce, and multiple frameworks exist for building agents.

AI agents are more than search engines; they are often described as "digital employees." While a standard AI (like a basic chatbot) waits for a prompt to give an answer, an agent is given a goal and then decides which steps to take to reach it. This shift from "answering" to "acting" happens through two specific mechanisms: Planning (for Autonomy) and Function Calling (for Access). Through planning, the agent uses reasoning to decompose the goal into subtasks. Through function calling, it invokes the functions that have been exposed to it through APIs.
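The two mechanisms can be sketched together: a planner turns a goal into a list of function calls, and a dispatcher runs only the functions the agent has been granted. Everything here is hypothetical, including the `search_vendors` and `create_order` functions and the fixed plan, which stands in for model-driven reasoning.

```python
from typing import Callable

# Hypothetical functions the agent has been granted access to.
def search_vendors(item: str) -> str:
    return f"vendor list for {item}"

def create_order(item: str) -> str:
    return f"order created for {item}"

FUNCTIONS: dict[str, Callable[..., str]] = {
    "search_vendors": search_vendors,
    "create_order": create_order,
}

def plan(goal: str) -> list[dict]:
    """Stand-in for model-driven planning: returns a fixed list of calls."""
    item = goal.removeprefix("buy ")
    return [
        {"name": "search_vendors", "arguments": {"item": item}},
        {"name": "create_order", "arguments": {"item": item}},
    ]

def execute(goal: str) -> list[str]:
    results = []
    for call in plan(goal):
        fn = FUNCTIONS.get(call["name"])
        if fn is None:  # only allow-listed functions may run
            raise PermissionError(call["name"])
        results.append(fn(**call["arguments"]))
    return results

outputs = execute("buy laptops")
```

The allow-list lookup in `execute` is where access is actually enforced: whatever the planner proposes, the agent can only reach functions that appear in `FUNCTIONS`.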

Because agents have both autonomy and access, they are high-value targets. The associated risks include:

- Privilege escalation

- Indirect prompt injection

- Data exfiltration

- Data aggregation and inference

- Faster Mean Time to Exfiltrate

Strong access controls, separation of duties, sandboxed ephemeral environments, human review and approval of sensitive, high-stakes actions, and contextual network security and DLP are all necessary. It is also highly beneficial to combine these traditional security approaches with User and Entity Behavior Analytics (UEBA).

In this context, the "Entity" being analyzed is the AI agent itself. Since agents function as autonomous "non-human identities," UEBA creates a behavioral fingerprint for them to detect when they have been compromised by a prompt injection or have gone rogue.

Netskope UEBA use cases

Agents are often identified using service principals and unique agent IDs. Once an AI agent is assigned an identifier (in email address format) within a SaaS application, it is listed and managed through Netskope's SaaS app connector. The prerequisite is that Netskope has a supported connector for the SaaS app in question. Beyond UEBA, contextual DLP, threat protection, and retroactive scans of SaaS apps can also be applied. Contextual DLP uses predefined or custom DLP rules to alert on or prevent sensitive data movement.

Netskope’s Behavior Analytics tool looks at patterns of human behavior, and then applies machine learning algorithms and statistical analysis to detect meaningful anomalies from those patterns—anomalies that indicate potential threats. Instead of tracking devices or security events, behavior analytics tracks users and entities. Analyzing activities will help detect insider threats, compromised accounts, compromised devices, rogue insiders, data exfiltration, lateral movement, anomalous behavior, and advanced persistent threats.

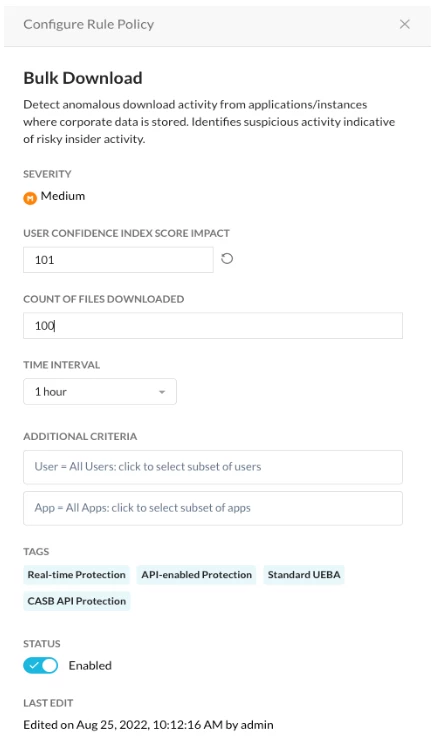

Risky Agentic AI activities that can be detected and flagged by Netskope UEBA include:

- Spike in downloads from managed applications,

- Spike in downloads with DLP policy violations from managed applications,

- Spike in uploads to unmanaged applications,

- Spike in uploads with DLP policy violations to unmanaged applications,

- Potential corporate data movement,

- Potential corporate data movement with DLP policy violations,

- Spike in documents shared outside the organization from managed applications,

- First access to an application by the AI agent,

- First access to an application for your organization,

- First access to an S3 bucket,

- Spike in files deleted by the agent,

- Spike in failed login attempts.

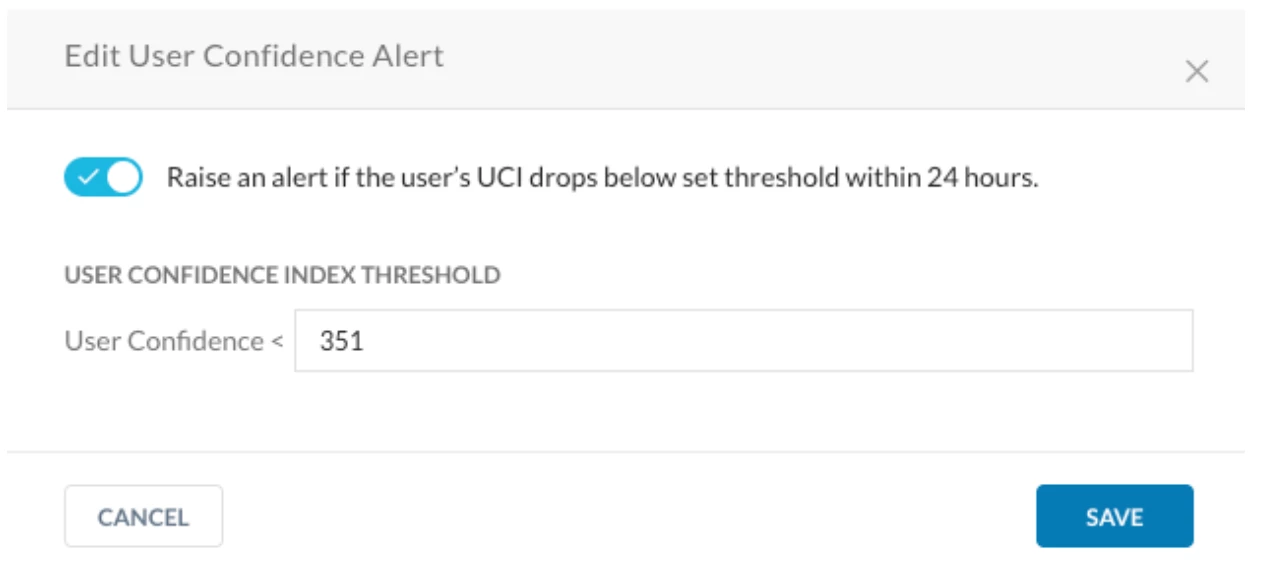
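Two of the detection types listed above, "spike" and "first access," can be illustrated with simple statistics. The sketch below is a generic example and not Netskope's detection logic: the z-score threshold, the sample counts, and the application names are all assumptions.

```python
from statistics import mean, stdev

def is_spike(history: list[int], today: int, threshold: float = 3.0) -> bool:
    """Flag today's count if it sits more than `threshold` standard
    deviations above the agent's own historical mean."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return today > mu
    return (today - mu) / sigma > threshold

def is_first_access(known_apps: set[str], app: str) -> bool:
    """True when the agent has never been seen using this application."""
    return app not in known_apps

downloads_per_day = [10, 12, 9, 11, 10, 13, 11]  # illustrative values
spike = is_spike(downloads_per_day, today=250)
normal = is_spike(downloads_per_day, today=12)
new_app = is_first_access({"Slack", "Salesforce"}, "Dropbox")
```

Because the baseline is per agent, a volume that is routine for a bulk-export agent can still be flagged when it comes from a customer-service agent.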

Netskope’s UEBA engine manages and maintains a score called ‘User Confidence Index’ (UCI) for every user/entity.

The UCI score, along with other metrics, provides more context for UEBA detections and helps security administrators and practitioners take timely, appropriate action against AI agents that pose a risk to the organization.

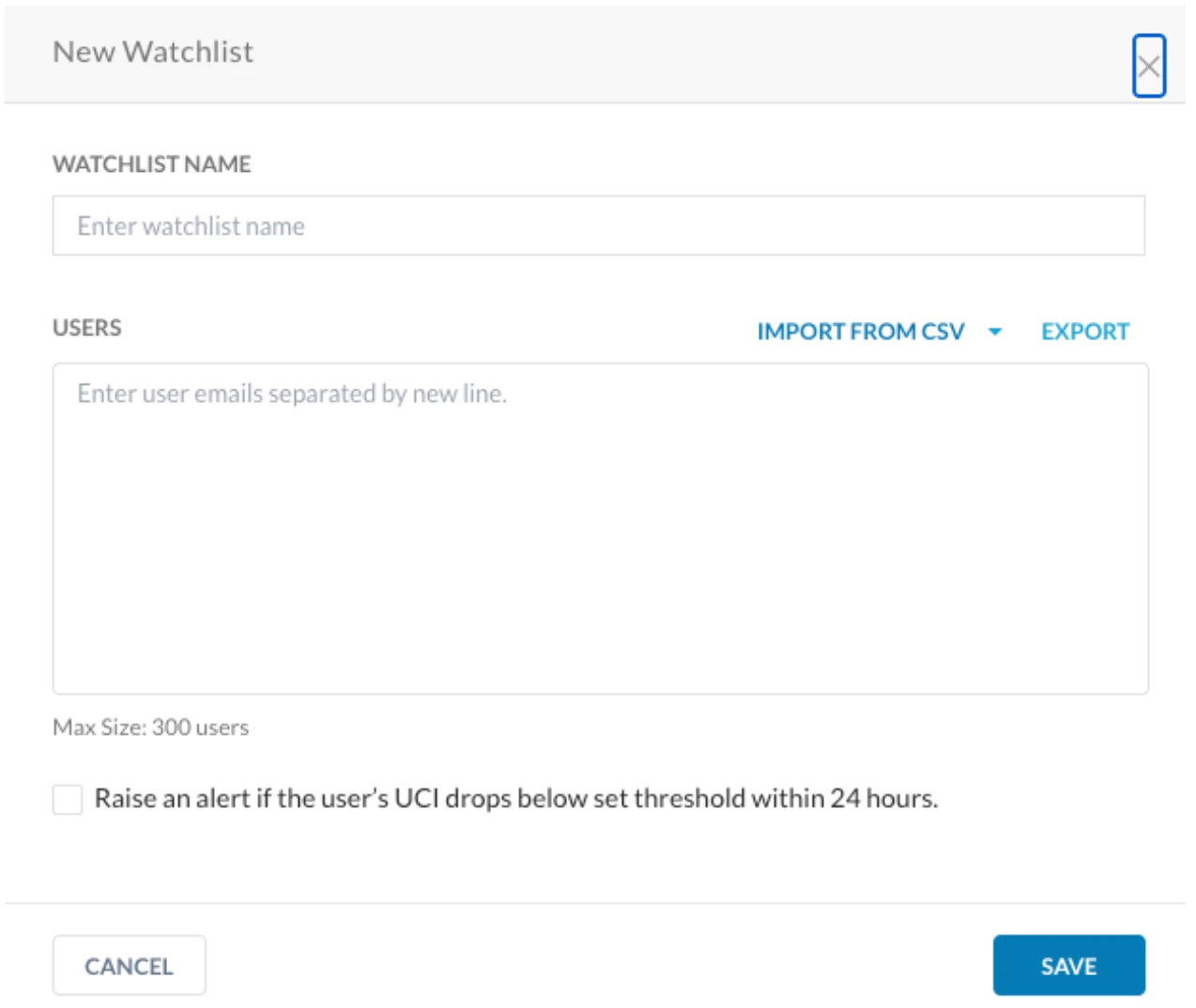
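The idea of a per-entity confidence score can be sketched as a value that drops as alerts accumulate. This is a hypothetical model, not Netskope's UCI algorithm: the starting value of 1000, the penalty per alert type, and the review threshold are all assumptions chosen for illustration.

```python
# Assumed penalty per alert type; values are illustrative, not Netskope's.
PENALTIES = {
    "spike_in_downloads": 100,
    "first_access_to_app": 25,
    "spike_in_failed_logins": 150,
}

def confidence_score(alerts: list[str], start: int = 1000) -> int:
    """Subtract a penalty per alert, never dropping below zero."""
    score = start
    for alert in alerts:
        score -= PENALTIES.get(alert, 50)  # unknown alert types get a default
    return max(score, 0)

def triage(score: int, threshold: int = 700) -> str:
    """Map a score to a coarse action; the threshold is an assumption."""
    return "review agent" if score < threshold else "no action"

score = confidence_score(
    ["spike_in_downloads", "spike_in_failed_logins", "spike_in_downloads"]
)
decision = triage(score)
```

A single low-severity alert leaves the score above the threshold, while a cluster of alerts pushes the agent into review, which matches how a cumulative score is meant to add context to individual detections.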

UEBA policies and their associated watchlists can be tuned and tailored to fit specific use cases and detection scenarios.

Watchlists can be configured as shown below:

UEBA can also consume events and alerts from other security tools, and UCI scores can be exported to other platforms. More details on UEBA integration with third-party tools can be found at https://docs.netskope.com/en/third-party-integrations-with-advanced-ueba

By treating AI agents as digital insiders, UEBA looks for bad intent and acts as a 'Security Camera' for the AI age. While firewalls check the door, UEBA watches the hallways to see if the people (and agents) inside are acting the way they are supposed to.